Hi all,

Got a rather colossal post here. Apologies if it’s too much for one.

I’m in the early stages of implementing CsoundUnity into my 3D Unity game.

I’m working out an efficient and modular workflow for managing instruments in the game world. As this is new to me, I’m not sure what approach would be best when handling many different instruments, or how to organise CsoundUnity components sensibly.

I’d love to know how others go about managing their own audio systems in large game worlds that require many different instruments. If you can spot anything in my current approach that seems counterintuitive, I’d love to hear it.

Context:

- The game will have many different instruments for all manner of sounds.

- The game has a Player and multiple NPCs that walk around.

- For now, I’m just testing a footstep instrument, which is triggered through Animation Events, before I move on to further things. I’ve encountered some issues in getting this working for NPCs in particular.

Setup:

The scene hierarchy contains:

- 1x central CabbageAudioManager script

The Player and NPC prefabs contain:

- 1x Audio Source

- 1x CsoundUnity component

- 1x Custom instrument sound manager script (AudioFootStepManager).

The Player contains 1x Audio Listener component.

This setup is just for early testing. I’m aware that having so many CsoundUnity components alive at the same time is going to be pretty awful for performance, as there is a relatively high density of NPCs. I haven’t figured out a solution to this yet. Can I do more with less somehow?

CabbageAudioManager Script

In the hierarchy I have a single AudioSFXManager GameObject that contains a custom CabbageAudioManager script. It acts as a central manager responsible for setting the instrument data passed into it by all instrument manager scripts. I use a static instance of this class so that all instrument manager scripts can share access to it.

CabbageAudioManager Example:

    using UnityEngine;

    public class CabbageAudioManager : MonoBehaviour
    {
        public static CabbageAudioManager Instance { get; private set; }

        private void Awake()
        {
            // Keep a single shared instance alive across scene loads.
            if (Instance != null && Instance != this)
            {
                Destroy(gameObject);
            }
            else
            {
                Instance = this;
                DontDestroyOnLoad(gameObject);
            }
        }

        // Set a named channel on the given CsoundUnity component.
        public void SetParameter(CsoundUnity cSound, string parameterName, float value)
        {
            cSound.SetChannel(parameterName, value);
            //Debug.Log($"Parameter {parameterName} set to: {value}");
        }

        // Send a score event of the form: i"<parameterName>" 0 <1 or 0>
        public void SetTrigger(CsoundUnity cSound, string parameterName, bool state)
        {
            string stateValue = state ? "1" : "0";
            string triggerCommand = $"i\"{parameterName}\" 0 {stateValue}";
            cSound.SendScoreEvent(triggerCommand);
            //Debug.Log($"Trigger {parameterName} set to: {stateValue}");
        }
    }

AudioInstrumentManager Scripts

I plan to use custom AudioInstrumentManager scripts as a convention for each kind of instrument (AudioFootStepManager, AudioRainManager, AudioDialogueManager, etc.), since each instrument will be unique in how its parameters are referenced and handled.

AudioFootStepManager is a script that interfaces with the Cabbage footstep instrument, updating its values based on surface type, walking speed, etc.

It gets the CsoundUnity component attached to the GameObject on Awake and passes this through to our CabbageAudioManager for triggering/processing the instrument.

The footstep instrument is used for the Player and the NPCs.

It’s triggered through Animation Events for each step taken, via a function ‘TriggerFootstep’ in AudioFootStepManager.

AudioFootStepManager Example

    using UnityEngine;

    public class AudioFootStepManager : MonoBehaviour
    {
        private CsoundUnity cSoundObj;

        private const string Instrument = "Footstep";
        private const string GrassParam = "grassMix";
        private const string WoodParam = "woodMix";
        private const string StoneParam = "stoneMix";
        private const string MudMixParam = "mudMix";
        private const string WaterParam = "waterMix";
        private const string SnowParam = "snowMix";
        // continuousStepsParam, continuousDroneParam and SneakParam are further
        // string constants that are omitted from this excerpt.

        private int minVal = 0;
        private int maxVal = 1;

        private void Awake()
        {
            cSoundObj = GetComponent<CsoundUnity>();
        }

        private void Start()
        {
            // Instantly set ground material to grass initially.
            SetTextureInstant(maxVal, minVal, minVal, minVal, minVal, minVal);

            // Make sure Steps, Drone and Sneak are disabled on Start.
            // (Steps and Drone are utility modes for designing the instrument's sound in Cabbage.)
            CabbageAudioManager.Instance.SetTrigger(cSoundObj, continuousStepsParam, false);
            CabbageAudioManager.Instance.SetTrigger(cSoundObj, continuousDroneParam, false);
            CabbageAudioManager.Instance.SetTrigger(cSoundObj, SneakParam, false);
        }

        // Instantly set the surface type values of the footstep instrument.
        public void SetTextureInstant(float grass, float wood, float stone, float mudMix, float water, float snow)
        {
            CabbageAudioManager.Instance.SetParameter(cSoundObj, GrassParam, grass);
            CabbageAudioManager.Instance.SetParameter(cSoundObj, WoodParam, wood);
            CabbageAudioManager.Instance.SetParameter(cSoundObj, StoneParam, stone);
            CabbageAudioManager.Instance.SetParameter(cSoundObj, MudMixParam, mudMix);
            CabbageAudioManager.Instance.SetParameter(cSoundObj, WaterParam, water);
            CabbageAudioManager.Instance.SetParameter(cSoundObj, SnowParam, snow);
        }

        // Trigger the footstep instrument, called by an Animation Event.
        public void TriggerFootstep()
        {
            CabbageAudioManager.Instance.SetTrigger(cSoundObj, Instrument, true);
        }
    }
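To illustrate how a surface type could be turned into the six values that SetTextureInstant expects, here is a minimal sketch. SurfaceType and SurfaceMix are hypothetical names made up for this example and are not part of the project; the sketch also assumes a hard switch between surfaces (1 for the active one, 0 for the rest) rather than any blending.

```csharp
using System;

// Hypothetical names for illustration only; the order matches the
// parameters of SetTextureInstant (grass, wood, stone, mud, water, snow).
public enum SurfaceType { Grass, Wood, Stone, Mud, Water, Snow }

public static class SurfaceMix
{
    // Returns one mix value per surface: 1 for the active surface, 0 elsewhere.
    public static float[] For(SurfaceType surface)
    {
        var mix = new float[Enum.GetValues(typeof(SurfaceType)).Length];
        mix[(int)surface] = 1f;
        return mix;
    }
}
```

Inside AudioFootStepManager this could be used as `float[] m = SurfaceMix.For(SurfaceType.Wood);` followed by `SetTextureInstant(m[0], m[1], m[2], m[3], m[4], m[5]);`.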

ISSUES:

----- Issue 1: -----

Error in the console

This error arises from the Player GameObject.

On Awake in play mode, the error log reads:

‘GameObject has multiple AudioSources and/or AudioListeners attached. While built-in filters like lowpass are instantiated separately, components implementing OnAudioFilterRead may only be used by either one AudioSource or AudioListener at a time. The reason for this is that any state information used by the callback exists only once in the component, and the source or listener calling it cannot be inferred from the callback. In this case the OnAudioFilterRead callback of script CsoundUnity was first attached to a component of type AudioListener on the game object Human after which a component of type AudioSource tried to attach it.’

Debugging efforts:

I have thoroughly checked its hierarchy and I definitely only have one AudioSource and one AudioListener on the Player. The NPCs also have only one AudioSource.

There are no other AudioListeners present. I have manually checked, and also searched for the type in the hierarchy, and the search confirms that there is only one, on the Player GameObject. This doesn’t seem to have affected the sound, however; the Player’s footstep sounds work fine.
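The manual check above can also be done from code. The following is only a rough sketch: AudioComponentAudit is a hypothetical helper that is not part of the project. Since the error text names both an AudioListener and an AudioSource on the game object Human, it also logs whether a listener shares its GameObject with an AudioSource and a CsoundUnity component.

```csharp
using UnityEngine;

// Hypothetical debugging helper; attach to any GameObject in the scene.
public class AudioComponentAudit : MonoBehaviour
{
    private void Start()
    {
        // Passing 'true' also includes components on inactive GameObjects
        // (this overload is available from Unity 2020.1).
        AudioListener[] listeners = FindObjectsOfType<AudioListener>(true);
        AudioSource[] sources = FindObjectsOfType<AudioSource>(true);

        Debug.Log($"AudioListeners: {listeners.Length}, AudioSources: {sources.Length}");

        foreach (AudioListener listener in listeners)
        {
            // Count the AudioSources that sit on the same GameObject as this listener.
            int sourcesOnSameObject = listener.GetComponents<AudioSource>().Length;
            bool hasCsound = listener.GetComponent<CsoundUnity>() != null;

            Debug.Log(
                $"Listener on '{listener.name}': {sourcesOnSameObject} AudioSource(s) " +
                $"on the same GameObject, CsoundUnity present: {hasCsound}",
                listener);
        }
    }
}
```

Clicking a log entry in the console should highlight the GameObject it refers to, since the listener is passed as the log context.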

----- Issue 2: -----

No footstep sound from NPC’s (EDIT: Resolved! See solution in further messages below)

The instrument itself is working fine for the Player. I’m hearing footsteps as triggered by the Animation Events and the instrument manager is indeed updating the instrument parameters correctly. Testing the button to trigger the footstep produces sound. All of this only seems to work for the Player.

I cannot hear anything from any of the NPCs, even though their CsoundUnity and AudioSource components are set up in the same way. The instrument appears to be triggering correctly: I can see output in the NPC’s CsoundUnity meter as they walk and trigger the footstep. I just cannot hear them. Testing the button to trigger the footstep doesn’t produce any sound either.

Debugging efforts:

-

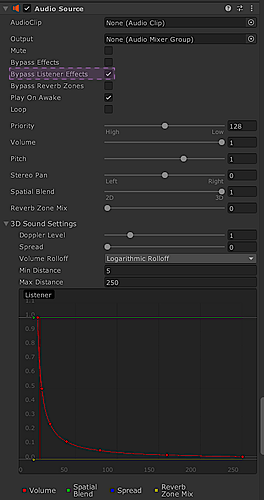

I checked the AudioSource’s min/max distances to ensure it’s set to a sensible distance, tried different values to ensure the sound wasn’t just being culled. This didn’t have any effect.

-

Ensured that the material type for the footstep instrument is set (Grass, Wood, Stone etc…). If this wasn’t set, then the instrument would be silent. It’s set to maxVal (1) in the Start method of AudioFootStepManager, and the inspector indeed reflects that this is true.

-

Enabled ‘Log Csound Output’ in CsoundUnity component to catch anything strange. It seems the instrument is being triggered, with ‘new alloc for instr Footstep’ appearing in the console for each step taken by the Player and NPCs (i’ve checked both in isolation). I did also see another message, appearing once for the Player and NPC: ‘insert_score_event(): invalid instrument number or name 0’. As I mentioned however, the footstep sound is working correctly for the Player so i’m not sure why this appears.
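For reference when reading the Csound log, this is a small sketch of the score strings that SetTrigger builds. ScoreEventPreview is a hypothetical helper that only mirrors the string formatting in CabbageAudioManager.SetTrigger without sending anything to Csound. Whether any of these strings is related to the ‘invalid instrument number or name’ message is not something this sketch confirms.

```csharp
// Hypothetical helper: reproduces the formatting used in SetTrigger
// so the resulting score lines can be inspected or logged.
public static class ScoreEventPreview
{
    public static string Build(string parameterName, bool state)
    {
        string stateValue = state ? "1" : "0";
        return $"i\"{parameterName}\" 0 {stateValue}";
    }
}

// ScoreEventPreview.Build("Footstep", true)  gives: i"Footstep" 0 1
// ScoreEventPreview.Build("Footstep", false) gives: i"Footstep" 0 0
```

In a Csound i-statement the fields after the instrument name are the start time (p2) and the duration (p3), so the 1 or 0 produced from the bool ends up in the duration field.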

I’ve also attached the current state of my WIP footstep instrument .csd, in case it helps.

FootstepSFX.csd (28.7 KB)

Would love anyone’s input on all this.

Thank you for reading!

Unity Version: Unity 2022.2.18

Cabbage Version: 2.9.98

CsoundUnity Version: 3.4.0