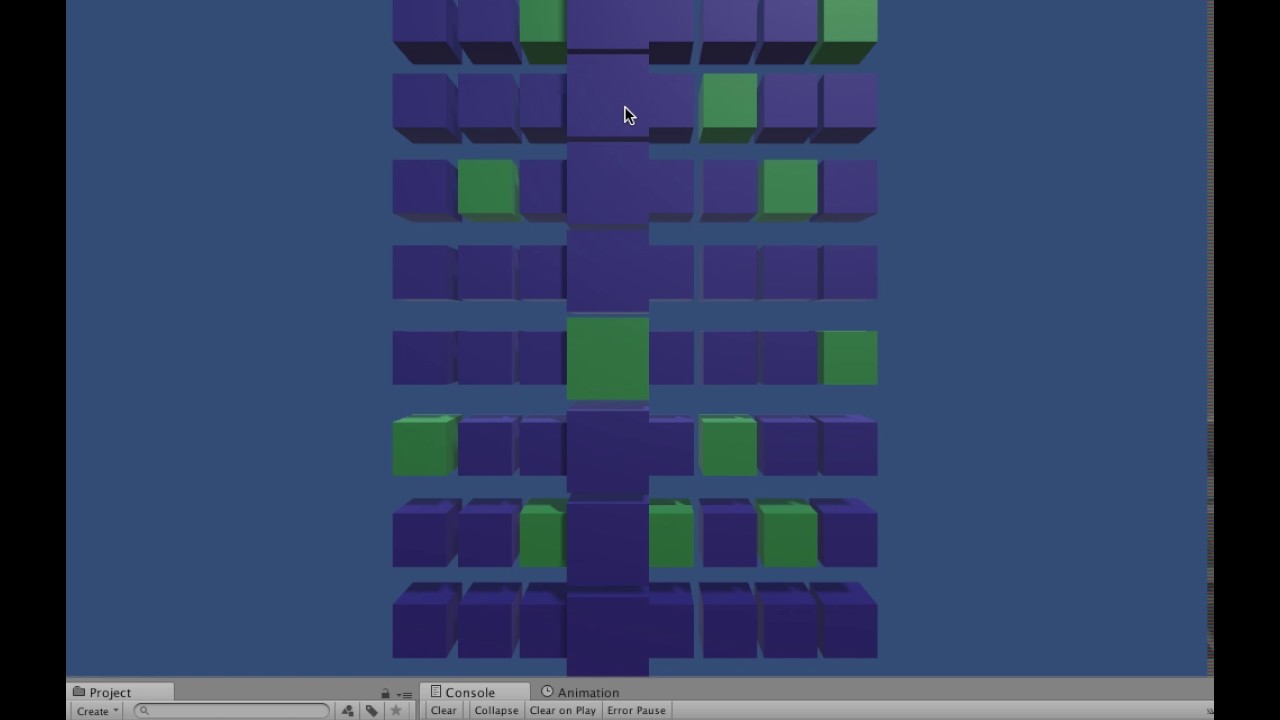

well, actually we are talking about eight 8x8 sequencers - each 8x8 sequencer is a layer. these all run in tandem, synced to the main clock, and are arranged along the z axis like a cube. the triggering will always go left to right within the grid, and instrumentation is basically set per layer. those controls are for one 8x8 layer.

then there’s the rotation aspect, which i haven’t discussed. imagine being able to rotate all the layers, arranged like a cube, as a cube: keep the layer assignment and sequence triggering exactly the same, but rotate the notes around, like in this movie:
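to make the rotation idea concrete, here’s a minimal Python sketch (not the prototype’s code). it assumes the cube is stored as `cube[layer][row][col]` holding 0/1 note flags, and that one rotation is a 90-degree turn about the vertical axis; the storage layout and all the function names are assumptions for illustration. the layer index still selects the instrument and playback still scans columns left to right; only the note flags move.

```python
SIZE = 8  # 8 layers x 8 rows x 8 columns

def empty_cube(size=SIZE):
    """cube[layer][row][col] -> 0 (no note) or 1 (note on)."""
    return [[[0] * size for _ in range(size)] for _ in range(size)]

def rotate_about_vertical(cube):
    """Rotate the note flags 90 degrees about the vertical (row) axis.

    Rows keep their height; layer (z) and column (x) swap, with one of
    them mirrored, so notes migrate from one layer to another.
    """
    size = len(cube)
    out = empty_cube(size)
    for layer in range(size):
        for row in range(size):
            for col in range(size):
                # (layer, col) -> (col, size - 1 - layer)
                out[col][row][size - 1 - layer] = cube[layer][row][col]
    return out

def active_rows(cube, layer, step):
    """Rows in `layer` that have a note at column `step` (left-to-right scan)."""
    return [row for row in range(len(cube)) if cube[layer][row][step]]

cube = empty_cube()
cube[0][3][1] = 1                      # layer 0, row 3, column 1
rotated = rotate_about_vertical(cube)
print(active_rows(rotated, layer=1, step=7))  # the note now lives in layer 1
```

in this sketch the note keeps its row (so its pitch slot), but it is now played by layer 1’s instrument at a different step. four quarter turns bring the cube back to where it started.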

(URL is a 200MB plus movie - here’s a download link that doesn’t result in a movie player inline: https:www.dropbox.com (and then) /s/5d3mbh3segi0kvv/constellation-prototype.mov?dl=0)

note this demo doesn’t feature note assignment or note editing in a layer, but it’s in progress. so the takeaway here is that i think the instruments would have to be separate from note data and timing, unless you put everything together in one huge file that would be callable by layer. my brain is sort of shorting out here on how you can get eight 8-voice instrument definitions configurable from Unity with separate parameters. the biggest issue is that in PD i had a message with a hierarchy and used [route] objects to filter messages to destinations like ’ ', but in this situation i’m kind of lost on how the equivalent would work in Csound. and i think the examples in the project will most likely only show a couple of simple parameters being passed. technically i’d need a system or hierarchy of parameters.
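one possible way to get a [route]-like hierarchy without nested messages is to flatten each path into a single flat name. the Python sketch below only illustrates the naming scheme. the message format (`layer N voice M param value`), the parameter names, and the idea that the Csound side would read each name as a named control channel (for example with `chnget`) are all assumptions, not something taken from the project.

```python
PARAMS = ("cutoff", "ringmod")  # hypothetical parameter names

def channel_name(layer, voice, param):
    """Flatten a layer/voice/param hierarchy into one flat channel name."""
    return f"layer{layer}.voice{voice}.{param}"

def parse_message(message):
    """Turn a PD-style list such as 'layer 2 voice 5 cutoff 1200'
    into a (channel_name, value) pair."""
    tokens = message.split()
    if len(tokens) != 6 or tokens[0] != "layer" or tokens[2] != "voice":
        raise ValueError(f"unexpected message: {message!r}")
    layer, voice = int(tokens[1]), int(tokens[3])
    return channel_name(layer, voice, tokens[4]), float(tokens[5])

# Every addressable parameter for 8 layers x 8 voices.
all_channels = [
    channel_name(layer, voice, param)
    for layer in range(8)
    for voice in range(8)
    for param in PARAMS
]

print(len(all_channels))                             # 128
print(parse_message("layer 2 voice 5 cutoff 1200"))  # ('layer2.voice5.cutoff', 1200.0)
```

the hierarchy then lives in the name itself, so the sending side needs only a single "set this named value" call per parameter instead of a chain of routing objects.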

all my note and instrument assignment data is stored in a JSON file. here’s the layout for one 8x8 layer. this is just instrument and row assignment, not a grid of selected notes. keep in mind the notes keep shifting (rotating), so i wanted things separate.

"LayerObject0": {"InstNumber":0,"SeqLength":21,"BeatDivision":2,"NoteList":[12,17,19,24,26,31,34,36],"PosIntensity":[12,17,19,24,26,31,34,36]}

NoteList is the pitch assigned to each horizontal row, from bottom to top. PosIntensity is the velocity at any given position in the row. it’s also sort of meant to double as an option to send a value to control a parameter like a filter or ring mod effect.
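as a reading aid, here’s a small Python sketch of how that layer object could be unpacked. it assumes NoteList is indexed by row (bottom to top) and PosIntensity by column position within the row; the column indexing is an assumption, and the function names are made up for illustration.

```python
import json

LAYER_JSON = '''
{"LayerObject0": {"InstNumber": 0, "SeqLength": 21, "BeatDivision": 2,
  "NoteList": [12, 17, 19, 24, 26, 31, 34, 36],
  "PosIntensity": [12, 17, 19, 24, 26, 31, 34, 36]}}
'''

def load_layer(text, key):
    """Parse the JSON text and return the settings dict for one layer."""
    return json.loads(text)[key]

def note_event(layer, row, col):
    """Return (instrument, pitch, velocity) for a lit cell at (row, col).

    Pitch comes from the row, velocity from the column position.
    """
    return (layer["InstNumber"], layer["NoteList"][row], layer["PosIntensity"][col])

layer = load_layer(LAYER_JSON, "LayerObject0")
print(note_event(layer, row=0, col=2))  # (0, 12, 19)
```

because the settings hold no grid of lit cells, the rotating note data can change freely while this lookup stays the same.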

so if you’ve got any ideas on how one could create a template CSD file to convey this info, that would be a great start. the sticking points for me are:

- the instancing for voices (rows) for a single instrument

- if you were to dump the whole thing into one file (all 8 layers i mean) can the instrument itself and the layer settings be instanced, or would these all need separate defs?
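on the instancing question, here’s a sketch under assumptions rather than an answer. Csound score events are lines of the form `i p1 p2 p3 ...`, and one instrument definition can be started many times with different p-fields. the Python below just formats such lines from the layer data. the p-field layout (p4 = pitch, p5 = velocity), the fractional p1 used to tag the row, and the +1 offset so instrument numbers start at 1 are all hypothetical choices.

```python
def score_event(inst_number, row, start, dur, pitch, velocity):
    """Format one Csound score 'i' statement for a single row (voice)."""
    # Hypothetical tagging: 1.03 means instrument 1, row index 2.
    p1 = (inst_number + 1) + (row + 1) / 100.0
    return f"i {p1:.2f} {start:g} {dur:g} {pitch} {velocity}"

def events_for_step(layer, lit_rows, col, step_dur):
    """One event per lit row at column `col` of a layer settings dict."""
    start = col * step_dur
    return [
        score_event(layer["InstNumber"], row, start, step_dur,
                    layer["NoteList"][row], layer["PosIntensity"][col])
        for row in lit_rows
    ]

layer = {
    "InstNumber": 0,
    "NoteList": [12, 17, 19, 24, 26, 31, 34, 36],
    "PosIntensity": [12, 17, 19, 24, 26, 31, 34, 36],
}

for line in events_for_step(layer, lit_rows=[0, 2], col=1, step_dur=0.25):
    print(line)
# i 1.01 0.25 0.25 12 17
# i 1.03 0.25 0.25 19 17
```

in this layout the eight rows share one instrument definition and differ only in the values passed per event, so the layer settings stay in data rather than in separate defs.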

lots of questions. i’m just beginning this, but i think you have a clearer idea of what i’m doing anyway.